The LNLS proposal evaluation process is based on a Distributed Double-Anonymization (DDA) system: a peer review in which reviewers do not know the identity or institutions of the proposers, and vice versa, and in which all proposers and principal investigators of submitted proposals are potential reviewers. Proposals submitted by CNPEM researchers are also evaluated through this process.

In addition to changes in the proposal format, the technical feasibility evaluation process will also be modified, becoming more active, continuous, and integrated into the submission and evaluation flow.

Technical feasibility analysis will begin at the moment of proposal submission, without the need to wait for the final call deadline. Beamline scientists will provide feedback on technical feasibility issues as quickly as possible, allowing proponents to clarify aspects of the experiment and, when feasible, adjust the proposal to suit beamline capabilities. This process creates a more dynamic interaction, enabling refinement of proposals to make them technically viable.

The proposal submission period runs from February 10 to March 8, 2026.

The interactive technical feasibility process, with the possibility of dialogue and adjustments, will occur from February 10 to March 22, 2026.

Between March 22 and 29, 2026, the technical feasibility evaluations will be finalized, and proponents will no longer be able to make adjustments.

This new model aims to encourage proponents to submit their proposals before the final deadline (March 8, 2026), to allow more time for interaction with beamline teams and to increase the chances that the proposed experiments are technically viable and well matched to the Sirius infrastructure.
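The key dates above can be expressed as a small date check that also shows why early submission helps. This is an illustrative sketch only: `call_status` and `dialogue_days` are hypothetical helpers, every stated end date is assumed to be inclusive, and because the text gives March 22 both as the last day of dialogue and as the start of finalization, the sketch assumes dialogue stays open through March 22 and finalization covers March 23 to 29. Times of day and time zones are ignored.

```python
from datetime import date

# Key dates of the call as stated above (end dates ASSUMED inclusive).
SUBMISSION_START = date(2026, 2, 10)
SUBMISSION_END = date(2026, 3, 8)
DIALOGUE_END = date(2026, 3, 22)  # ASSUMED last day of feasibility dialogue
FINALIZATION_END = date(2026, 3, 29)


def call_status(day: date) -> str:
    """Describe which window of the call a given day falls in (hypothetical helper)."""
    if day < SUBMISSION_START:
        return "before the call"
    if day <= SUBMISSION_END:
        return "submission open, feasibility dialogue open"
    if day <= DIALOGUE_END:
        return "submission closed, feasibility dialogue open"
    if day <= FINALIZATION_END:
        return "feasibility finalization, no adjustments"
    return "feasibility stage closed"


def dialogue_days(submitted: date) -> int:
    """Days between a submission date and the ASSUMED end of the dialogue window."""
    if not SUBMISSION_START <= submitted <= SUBMISSION_END:
        raise ValueError("date is outside the submission period")
    return (DIALOGUE_END - submitted).days


print(dialogue_days(date(2026, 2, 10)))  # 40
print(dialogue_days(date(2026, 3, 8)))   # 14
```

Under these assumptions, a proposal submitted on February 10 leaves 40 days for dialogue with the beamline team, while one submitted on the March 8 deadline leaves only 14.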

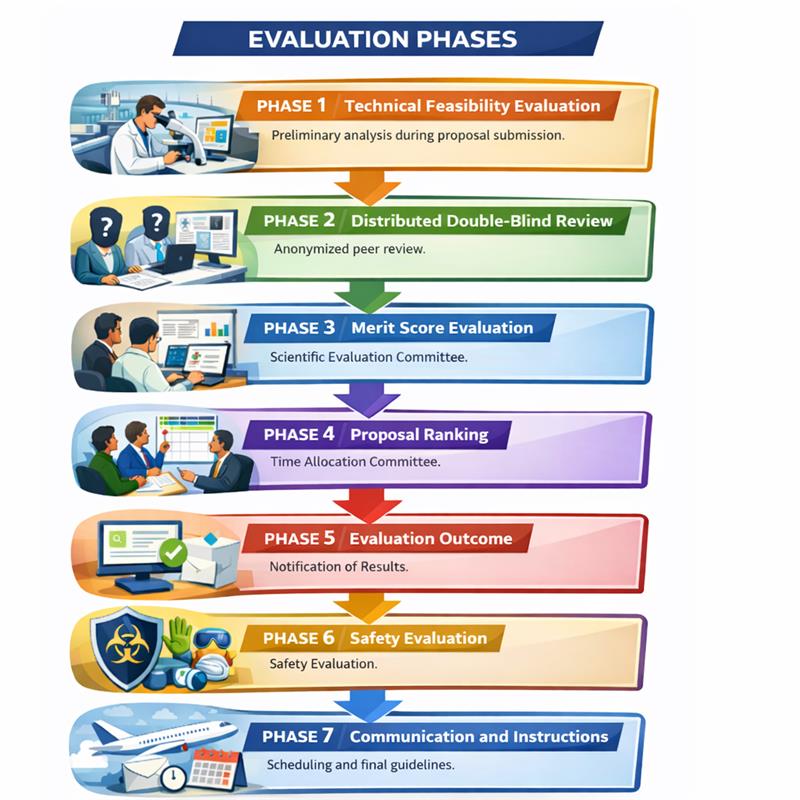

Phase 1 (Technical feasibility evaluation during the proposal submission period): Technical feasibility analysis starts as soon as each proposal is submitted. Beamline scientists provide feedback so that proponents can clarify the experiment and, when feasible, adapt the proposal to the beamline's capabilities before the interactive period ends.

Phase 2 (Distributed Double-Anonymization review): Proposals previously anonymized on the SAU Online platform are distributed to, and evaluated by, peers from the areas indicated in the submission form (area committee). The evaluation of new-format proposals prioritizes clarity, objectivity, and adherence to the scientific method, allowing direct identification of: (i) the scientific hypotheses to be tested; (ii) whether the proposed experiment is suitable to test them; and (iii) the maturity of the study, based on prior characterizations already carried out.

Phase 3 (Merit score analysis): The Proposal Scientific Evaluation Committee (CACIP) analyzes and ranks competing proposals based on the scores received in Phase 2, may adjust these scores, and prepares feedback for proponents. Particular attention is given to cases of major discrepancy between the scores received. CACIP defines the final score of each proposal using the same criteria as Phase 2.

Phase 4 (Proposal ranking): The Beamline Time Allocation Committee, composed of LNLS management, carries out an internal evaluation and defines the final ranking.

Phase 5 (Evaluation result): Proponents receive a notification through SAU Online with the result of the proposal evaluation. At this point, the proposal is not yet ready to be scheduled, because a sample safety evaluation is required before it can advance to the next stage.

Phase 6 (Safety): The Safety Committee internally evaluates the highest-ranked proposals to ensure compliance with safety requirements. If questions arise, the proponent receives a message through SAU Online and must promptly provide all additional information requested.

Phase 7 (Communication and Instructions): Proponents receive a message from SAU Online with the scheduled period and instructions to prepare for arrival and execution of the proposal.

Reviewers are instructed to provide a detailed evaluation of the technical and scientific quality of the proposal, paying attention to the following items, which will guide the report and the final grade:

Each of the three questions below receives a grade from 1 (lowest) to 5 (highest) according to the guidelines provided, and the overall grade is the average of these three grades.

Scores and criteria for the evaluation of proposals:

Scoring for Question 1: What is the scientific relevance of the hypothesis to be tested?

5 – Exceptional scientific question, clear and high impact

The question is very well defined, specific, and directly testable, addressing a critical knowledge gap.

There is strong alignment between hypothesis, methodology, and experimental capabilities.

It has the potential to generate significant conceptual advances or a paradigm shift, with high impact in the field.

4 – Clear and relevant question with good impact potential

The question is well formulated and testable, targeting a relevant knowledge gap.

The proposal shows good coherence between the hypothesis and the experimental approach.

The results may significantly expand or refine current understanding, with consistent impact.

3 – Valid question, but incremental or partially developed

The question is reasonably defined but presents some ambiguity or limitations in formulation.

It represents a natural extension of existing studies, with predominantly incremental impact.

The results are likely to confirm or adjust current interpretations, with moderate advancement.

2 – Weak, poorly defined, or low-impact question

The question is vague, partially formulated, or difficult to test.

The scientific motivation is limited or weakly connected to the proposed hypothesis.

The potential for conceptual advancement is low, with limited impact.

1 – Absent or non-testable question

There is no clear scientific question or testable hypothesis.

The proposal is based on replication or characterization without proper justification.

It does not allow an objective evaluation of scientific merit or conceptual advancement.

Scoring for Question 2: Will the proposed experiment test the hypothesis?

5 – Ideal and conclusive experiment design

The experiment directly tests the hypothesis with clearly defined and unambiguous observables.

The technique is necessary, sufficient, and optimized for the scientific question.

Positive or negative results lead to clear and unambiguous conclusions.

4 – Robust and well-aligned design

The experiment directly addresses the hypothesis, with only minor limitations or interpretative dependencies.

The technique is appropriate and well justified.

The results allow solid conclusions, with low ambiguity.

3 – Adequate design, but with limitations

The experiment addresses the hypothesis indirectly or partially.

The observables may require additional interpretation or allow multiple interpretations.

The technique is relevant but not fully optimized for the question.

2 – Weak or partially disconnected design

The experiment only partially addresses the hypothesis, with an indirect link between observables and the scientific question.

There is significant ambiguity in the interpretation of results.

The chosen technique is not ideal or is poorly justified.

1 – Inadequate or non-conclusive design

Even with ideal data, the experiment would not answer the proposed hypothesis.

There is no clear relationship between observables, technique, and scientific question.

The design is generic or inappropriate, preventing meaningful conclusions.

Scoring for Question 3: Do the prior characterizations and sample preparation support the hypothesis and the proposed experiment?

5 – Fully appropriate and well-validated sample and characterization

The sample is clearly appropriate for the experiment and directly aligned with the hypothesis being tested.

The preparation is well described, reproducible, and compatible with the technical requirements.

Prior characterizations are comprehensive and unequivocally demonstrate that the sample has the required properties.

4 – Appropriate sample with good characterization

The sample is suitable for the experiment, with well-described and consistent preparation.

Prior characterizations are sufficient to support the proposal, although not fully comprehensive.

There is good confidence that the sample will allow reliable testing of the hypothesis.

3 – Possibly adequate sample, with limitations

The suitability of the sample is plausible but not fully demonstrated.

The preparation is partially described or contains relevant gaps.

Prior characterizations are limited and may introduce uncertainty in data interpretation.

2 – Inadequate or insufficiently characterized sample

The link between the sample and the hypothesis is not well established.

The preparation is poorly described or potentially unsuitable for the proposed technique.

Prior characterizations are insufficient to ensure reliable experimental outcomes.

1 – Inadequate or non-validated sample

The sample is not appropriate for the experiment or there is no evidence of its suitability.

The preparation is absent, inconsistent, or incompatible with the technique.

There is no relevant prior characterization to support the proposal, fully compromising the experiment’s validity.
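As stated before the rubric, a reviewer's overall grade is the average of the scores for Questions 1 to 3. A minimal sketch of that arithmetic, where `overall_grade` is a hypothetical helper and each question score is assumed to lie between 1 and 5:

```python
def overall_grade(q1: float, q2: float, q3: float) -> float:
    """Return a reviewer's overall grade: the mean of the three question scores.

    Hypothetical helper for illustration; each score must lie in [1, 5].
    """
    scores = (q1, q2, q3)
    for s in scores:
        if not 1 <= s <= 5:
            raise ValueError(f"question score {s} is outside the 1-5 scale")
    return sum(scores) / len(scores)


# Example: scores of 5, 4 and 3 for Questions 1, 2 and 3.
print(overall_grade(5, 4, 3))  # 4.0
```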

Weighted score ranking

A weighting value is applied to each reviewer's score according to the reviewer's knowledge and expertise in the area of the proposal being evaluated; it expresses the reviewer's confidence in the grade assigned. Whether or not the reviewer is an expert in the proposal's scientific area, this weighting is applied as a correction factor, and CACIP adjusts the final grade accordingly.

A drop-down menu next to the grade field on the proposal review screen offers the values 0.5, 1.0, and 1.5, from which the reviewer selects the weighting value at the time of evaluation. CACIP then evaluates the assigned grade and the weighting value together to prepare the final grade and the comments to users. The table below summarizes how the weight reflects the familiarity between the reviewer's area of expertise and the research area of the proposal.

| Weight | Knowledge in the research area |
|---|---|
| 0.5 | I do not have enough knowledge in the area. |
| 1.0 | I have enough knowledge in the area to produce a qualified review. |
| 1.5 | I am a specialist working in the area and I can produce a well-qualified review. |
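The text does not give a formula for combining grades and weights; CACIP evaluates them jointly. Purely as an illustration, the sketch below assumes a weight-averaged mean of the reviewers' overall grades and flags a "major discrepancy" (mentioned in Phase 3 but not defined) when grades differ by at least a hypothetical threshold of 2 points. `Review`, `weighted_grade`, and `has_major_discrepancy` are hypothetical names, not part of SAU Online.

```python
from dataclasses import dataclass

# Weights offered in the review screen's drop-down menu.
ALLOWED_WEIGHTS = (0.5, 1.0, 1.5)


@dataclass
class Review:
    grade: float   # reviewer's overall grade (mean of the three question scores)
    weight: float  # self-declared familiarity: 0.5, 1.0 or 1.5


def weighted_grade(reviews: list[Review]) -> float:
    """Weight-averaged grade; an ASSUMED aggregation, not the official CACIP rule."""
    if not reviews:
        raise ValueError("at least one review is required")
    for r in reviews:
        if r.weight not in ALLOWED_WEIGHTS:
            raise ValueError(f"unexpected weight {r.weight}")
    total_weight = sum(r.weight for r in reviews)
    return sum(r.grade * r.weight for r in reviews) / total_weight


def has_major_discrepancy(reviews: list[Review], threshold: float = 2.0) -> bool:
    """Flag grade spreads of at least `threshold` (HYPOTHETICAL default of 2 points)."""
    grades = [r.grade for r in reviews]
    return max(grades) - min(grades) >= threshold


reviews = [Review(4.0, 1.5), Review(2.0, 0.5), Review(3.0, 1.0)]
print(round(weighted_grade(reviews), 2))  # 3.33
print(has_major_discrepancy(reviews))     # True
```

In this example, the specialist's grade of 4.0 pulls the result above the unweighted mean of 3.0, which is the kind of correction the weighting is meant to express; the actual adjustment remains a CACIP judgment.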

The Committee for the Scientific Evaluation of Proposals (CACIP), composed of renowned researchers external to CNPEM and experienced in synchrotron use, will analyze the scientific merit of research proposals based on anonymous reviewer reports. The final score of each proposal will be based exclusively on the distribution of reviewer evaluations and the information provided in the proposals.

The composition of the CACIP with their respective affiliations, areas of activity in the committee, and terms of office are provided in the table below. In case of unavailability of any member for any reason, LNLS will appoint a substitute. Terms of office may be renewed.

| Member | Affiliation | Country | CACIP Area | Term of office |
|---|---|---|---|---|
| Marcelo Raul Ceolin | INIFTA | Argentina | Chemistry | 2022-24 |
| Maria Luiza Rocco | UFRJ | Brazil | Chemistry | 2022-24 |
| Watson Loh | UNICAMP | Brazil | Chemistry | 2022-24 |
| Alexandre Malta Rossi | CBPF | Brazil | Sustainability and Earth Sciences/Life Sciences | 2023-25 |
| Regina Cely Rodrigues Barroso | UERJ | Brazil | Sustainability and Earth Sciences/Life Sciences | 2022-24 |
| Teógenes Senna De Oliveira | UFV | Brazil | Sustainability and Earth Sciences/Life Sciences | 2022-24 |
| Wânia Duleba | USP | Brazil | Sustainability and Earth Sciences/Life Sciences | 2022-24 |
| Altair Soria Pereira | UFRGS | Brazil | Physics/Engineering | 2022-24 |
| Jonder Morais | UFRGS | Brazil | Physics/Engineering | 2022-24 |
| Paulo de Tarso | UFC | Brazil | Physics/Engineering | 2023-25 |
| Alejandro Pedro Ayala | UFC | Brazil | Physics/Engineering | 2024-26 |
| Abner de Siervo | UNICAMP | Brazil | Physics/Engineering | 2024-26 |

Criteria evaluated by the LNLS Allocation Committee (Stage 4 of the proposal flowchart):

Every proposal must be written in the third person to avoid identifying the proponents. Below are some tips to help conceal the proponents' identities and ensure a fairer evaluation process:

Please contact the beamline teams and the heads of the LNLS scientific divisions to discuss your ideas. For questions about proposal submission guidelines, contact the User Office (EdU – edu@cnpem.br).